EVL Overview

ETL (Extract–Transform–Load) system

ETL processing usually consists of three main parts:

- ETL itself (ETL jobs) – to process data

- Orchestration (ETL workflows) – to manage ETL jobs, handle job dependencies, wait for file delivery, report on processing via e-mail or SNMP traps, etc.

- Scheduling – to fire ETL workflows at a given time on a given day

Orchestration and Scheduling are quite often lumped together under the name Scheduler, but let's keep these two parts of an ETL system apart, following the Unix philosophy: "do one thing, and do it well".
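To illustrate that separation, here is a minimal sketch of a scheduler that does nothing except decide *which* workflows are due at a given moment. Running jobs, following dependencies and handling failures are deliberately left to the orchestration layer. The `ScheduleEntry` type, the `due_workflows` function and the workflow names are hypothetical and do not reflect how EVL itself stores schedules.

```python
from dataclasses import dataclass
from datetime import datetime, time


# Hypothetical schedule entry: which workflow to fire, on which weekdays, at what time.
@dataclass(frozen=True)
class ScheduleEntry:
    workflow: str
    weekdays: frozenset  # 0 = Monday ... 6 = Sunday, as returned by datetime.weekday()
    at: time


def due_workflows(schedule, now):
    """Return the names of workflows that should fire at `now` (minute precision).

    This is the scheduler's only responsibility; it never runs jobs itself.
    """
    return [
        e.workflow
        for e in schedule
        if now.weekday() in e.weekdays
        and (now.hour, now.minute) == (e.at.hour, e.at.minute)
    ]


schedule = [
    ScheduleEntry("daily_load", frozenset(range(7)), time(2, 0)),   # every day at 02:00
    ScheduleEntry("weekly_report", frozenset({0}), time(6, 30)),    # Mondays at 06:30
]

# 2024-01-01 is a Monday: at 02:00 only the daily workflow is due.
print(due_workflows(schedule, datetime(2024, 1, 1, 2, 0)))
```

The orchestrator would then receive these workflow names and take care of everything else, so either side can be replaced without touching the other.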

DAG = Directed Acyclic Graph

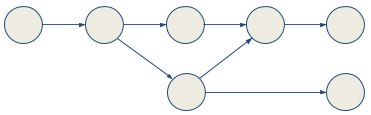

Both ETL jobs and ETL workflows consist of one or more directed acyclic graphs, with the following meaning:

|  | jobs | workflows |
|---|---|---|
| vertices | data-modifying components | jobs, other workflows |
| edges | data flows | successor |
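As a rough illustration of the workflow column of this table, a workflow can be modelled as a mapping from each job to the jobs it depends on (its predecessors). A topological sort then yields an order in which every job runs after its predecessors, and it fails if the graph contains a cycle, i.e. is not a DAG. This sketch uses Python's standard `graphlib` module (Python 3.9+); the job names and the `run_order` helper are hypothetical, not part of EVL.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical workflow: job -> set of jobs that must finish before it starts.
# Read in reverse, each entry describes the "successor" edges of the graph.
workflow = {
    "extract_orders": set(),
    "extract_customers": set(),
    "transform_orders": {"extract_orders"},
    "join": {"transform_orders", "extract_customers"},
    "load_dwh": {"join"},
}


def run_order(graph):
    """Return one valid execution order; raise CycleError if the graph is not a DAG."""
    return list(TopologicalSorter(graph).static_order())


print(run_order(workflow))

# A cycle makes the graph invalid as an ETL job or workflow.
try:
    run_order({"a": {"b"}, "b": {"a"}})
except CycleError:
    print("not a DAG")
```

Several orders may be valid (here the two `extract_*` jobs are independent), so a real orchestrator could also run independent jobs in parallel.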

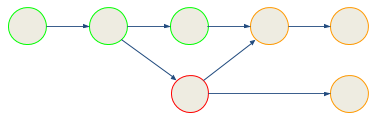

The main difference in approach between jobs and workflows is:

- When an ETL job fails, the whole job must be restarted.

- When an ETL workflow fails, it can be either restarted from the beginning or continued from the last failure(s).

So an ETL workflow like this:

might be restarted from the red job. Green (i.e. successful ones will be skipped.

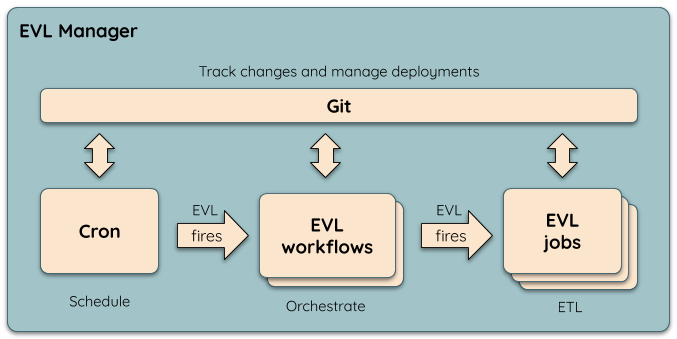
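The restart behaviour described above can be sketched as follows, assuming the status of each job from the previous run is known. Jobs that already succeeded ("green") are skipped, a failed job is retried once all its predecessors are done, and a job whose predecessor is still not done is left alone. Passing an empty status restarts the workflow from the beginning. The `restart` function, the status values `"ok"`/`"failed"` and the `run_job` callback are hypothetical names chosen for this example, not EVL's actual interface.

```python
from graphlib import TopologicalSorter

# Hypothetical workflow: job -> set of predecessor jobs.
workflow = {
    "a": set(),
    "b": {"a"},
    "c": {"a"},
    "d": {"b", "c"},
}


def restart(graph, previous_status, run_job):
    """Re-run a workflow, skipping jobs that succeeded in the previous run.

    previous_status maps job -> "ok" or "failed"; jobs missing from it never ran.
    run_job(job) returns True on success. Returns the updated status mapping,
    so a later restart can continue from any new failure(s).
    """
    status = dict(previous_status)
    for job in TopologicalSorter(graph).static_order():
        if status.get(job) == "ok":
            continue  # green job from the previous run: skip it
        if any(status.get(p) != "ok" for p in graph[job]):
            continue  # a predecessor is not done yet, so this job cannot run
        status[job] = "ok" if run_job(job) else "failed"
    return status


executed = []


def fake_run(job):
    """Stand-in for a real job runner: record the call and report success."""
    executed.append(job)
    return True


# Previous run: a and b succeeded, c failed (red), d never started.
previous = {"a": "ok", "b": "ok", "c": "failed"}
result = restart(workflow, previous, fake_run)
print(executed)
print(result)
```

Only `c` and `d` are executed on restart; `a` and `b` are skipped because they already succeeded.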

EVL parts

Considering the above theory, EVL splits an ETL system into three main entities:

All three entities are meant to be tracked in Git or another version control system.